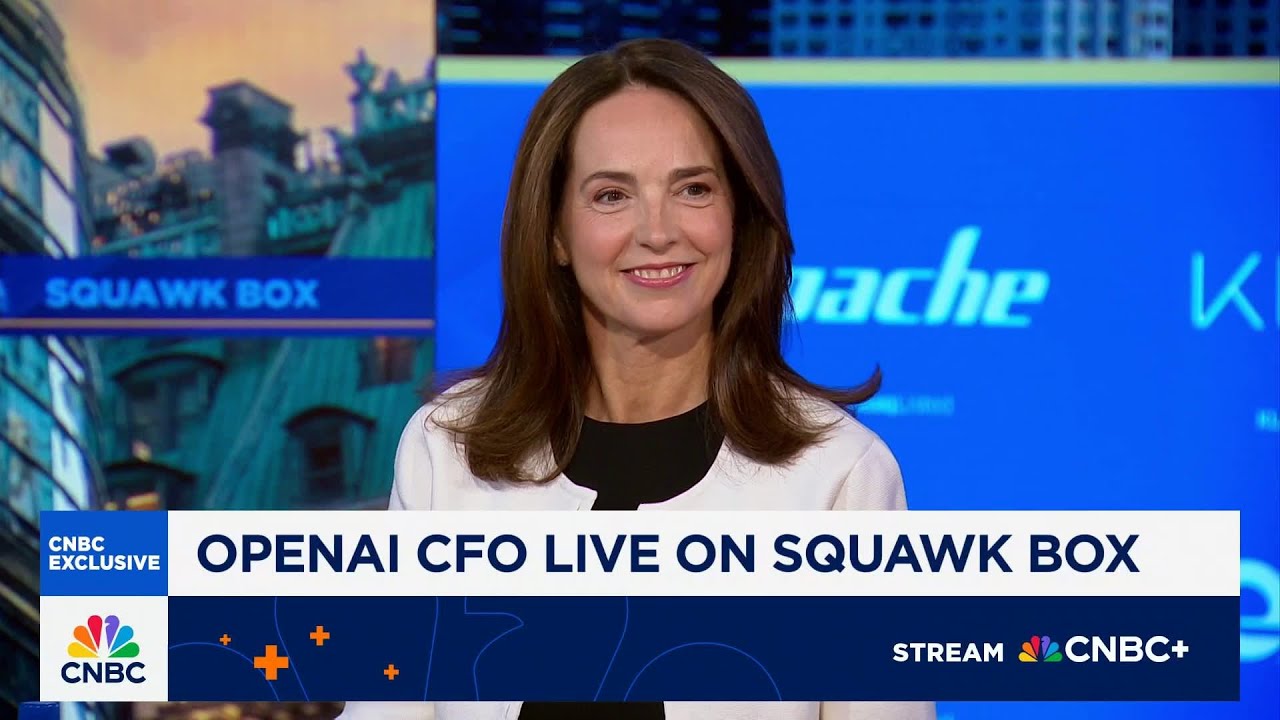

OpenAI CFO: "Constant Compute Shortage" Is Our Biggest Challenge in AI Infrastructure Buildout

Exclusive: Sarah Friar discusses computational constraints, ChatGPT-5 adoption trends, and why AI infrastructure parallels historic electricity expansions.

Persistent Compute Demands Drive AI Challenges

During a Washington World Forum interview, OpenAI CFO Sarah Friar identified limited compute as the company's most significant operational bottleneck. "The biggest thing we face is being constantly under compute," Friar said, underscoring the voracious GPU demands of AI systems even as infrastructure projects such as Stargate accelerate.

ChatGPT-5 Adoption Exceeds Expectations

Despite initial performance concerns after launch, ChatGPT-5 has seen Plus and Pro subscriptions accelerate on a base of 700M weekly active users. Personalization features such as memory have increased user retention, while enterprise adoption has "really outperformed expectations," according to Friar.

📈 Enterprise & API traction: Business adoption has become OpenAI's strongest growth vector over the past six months through workplace integrations.

Developer Response Defines Key Capabilities

Developer token usage surged over 50% week-over-week, with critical metrics showcasing technical adoption:

- Agentic behavior token usage doubled in deployment scenarios

- Usage of reasoning capabilities grew 8x post-launch

Infrastructure: Railroad Analogy Better Than Gold Rush

Rejecting the view that AI investment is a speculative bubble, Friar pointed to parallels with historic physical infrastructure buildouts: "We're in the early innings... It's more like the railroads or the buildout of electricity than anything I've seen." Unlike the mobile and web eras, AI requires physical infrastructure to scale at an unprecedented pace to match projections of its economic impact.

Competitive Moats Through Proprietary Data

In assessing startup viability, Friar outlined three competitive requirements:

- Targeting a specific problem rather than a hypothetical solution

- The ability to integrate into complex business processes

- Unique data access, noting "90%+ of data resides behind corporate/academic walls"

Shifting Dynamics in Search Markets

OpenAI reports that its share of the search market is growing faster thanks to conversational functionality, doubling from 6% to 12% in six months. Friar notes that conventional search metrics underestimate this impact: "When you conduct conversational searches, 5-6 interactions constitute a single query rather than multiple instances."

Industry impact: Infrastructure constraints increasingly influence competition as computational capacity becomes a primary moat distinguishing AI leaders.